Salesforce to Database Account Broadcast

Broadcasts changes to accounts in Salesforce to a database in real time. The detection criteria and the fields to move are configurable. Additional systems can easily be added so that they are also notified of changes. Real-time synchronization is achieved via outbound notifications or configurable rapid polling of Salesforce.

This template uses watermarking to ensure that only the most recent items are synchronized, and batch processing to handle many records efficiently at a time. A database table schema is included to make testing this template easier.

License Agreement

This template is subject to the conditions of the MuleSoft License Agreement. Review the terms of the license before downloading and using this template. You can use this template for free with the Mule Enterprise Edition, CloudHub, or as a trial in Anypoint Studio.

Use Case

As a Salesforce admin, I want to synchronize Accounts from Salesforce to a database.

This template serves as a foundation for setting up an online sync of Accounts from one Salesforce instance to a database. Every time an Account is created or an existing one changes, the integration detects the change in the source Salesforce instance and updates the Account in the target database table.

Requirements have been set not only to be used as examples, but also to establish a starting point to adapt your integration to your requirements.

As implemented, this template leverages the Mule batch module and Outbound messaging.

The batch job is divided into Process and On Complete stages.

The integration is triggered by a scheduler defined in the flow. On each trigger, the application queries Salesforce for the newest updates and creations that match the filter criteria, then executes the batch job.

During the Process stage, each Salesforce Account is filtered depending on whether it has an existing matching account in the database.

The last step of the Process stage groups the Accounts and inserts or updates them in the database.

Finally, during the On Complete stage, the template logs output statistics to the console.

The integration can also be triggered by an HTTP inbound connector defined in the flow, which executes the batch job with the message received from the source Salesforce instance.

Outbound messaging in Salesforce allows you to specify that changes to fields within Salesforce can cause messages with field values to be sent to designated external servers.

Outbound messaging is part of the workflow rule functionality in Salesforce. Workflow rules watch for specific kinds of field changes and trigger automatic Salesforce actions. In this case, the action sends Accounts as an outbound message to the Mule HTTP Listener, which processes the message and creates the Accounts in a database.

Considerations

Before running this template, certain preconditions must be met. They concern preparations in both the source (Salesforce) and destination (database) systems.

Failing to do so can lead to unexpected behavior of the template.

This template illustrates the broadcast use case between Salesforce and a Database, thus it requires a Database instance to work.

The template comes packaged with a SQL script to create the database table that it uses. It is the user's responsibility to use that script to create the table in an available schema and to change the configuration accordingly. The SQL script file is account.sql in src/main/resources/.

DB Considerations

This template uses date time or timestamp fields from the database to do comparisons and take further actions. While the template handles time zones by sending all such fields in a neutral time zone, it cannot handle time offsets. (Time offsets are time differences that may surface between date time and timestamp fields from different systems due to differences in each system's internal clock.)

Take this into consideration and take the actions needed to avoid the time offset.
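
To illustrate the distinction above, here is a minimal Python sketch. It is not part of the template, and the function and variable names are hypothetical. It normalizes timestamps to UTC so that values from different time zones compare correctly, then shows that a clock offset between two systems survives that normalization.

```python
from datetime import datetime, timedelta, timezone


def to_utc(ts: datetime) -> datetime:
    """Convert a timezone-aware timestamp to UTC (a neutral time zone)."""
    if ts.tzinfo is None:
        raise ValueError("naive timestamp: the time zone is unknown")
    return ts.astimezone(timezone.utc)


def is_newer(source_ts: datetime, target_ts: datetime) -> bool:
    """Return True when the source record changed after the target record."""
    return to_utc(source_ts) > to_utc(target_ts)


# The same instant expressed in two zones compares equal after normalization.
salesforce_ts = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
database_ts = datetime(2024, 1, 1, 7, 0, tzinfo=timezone(timedelta(hours=-5)))
same_instant = to_utc(salesforce_ts) == to_utc(database_ts)

# A database clock running 90 seconds fast is NOT corrected by normalization:
# a real update made 60 seconds later still looks older than the database row.
skewed_db_ts = database_ts + timedelta(seconds=90)
real_update = salesforce_ts + timedelta(seconds=60)
missed_update = not is_newer(real_update, skewed_db_ts)
```

In this sketch `same_instant` is true and `missed_update` is also true, which is why clock synchronization between the systems has to be handled outside the template.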

As a Data Destination

There are no considerations with using a database as a data destination.

Salesforce Considerations

Here's what you need to know about Salesforce to get this template to work:

- Where can I check that the field configuration for my Salesforce instance is the right one? See: Salesforce: Checking Field Accessibility for a Particular Field.

- Can I modify the Field Access Settings? How? See: Salesforce: Modifying Field Access Settings.

As a Data Source

If the user who configured the template for the source system does not have at least read-only permission for the fields that are fetched, an InvalidFieldFault API fault is returned:

    java.lang.RuntimeException: [InvalidFieldFault [ApiQueryFault
    [ApiFault exceptionCode='INVALID_FIELD'
    exceptionMessage='Account.Phone, Account.Rating, Account.RecordTypeId,
    Account.ShippingCity
    ^
    ERROR at Row:1:Column:486
    No such column 'RecordTypeId' on entity 'Account'. If you are attempting
    to use a custom field, be sure to append the '__c' after the custom
    field name. Reference your WSDL or the describe call for the appropriate
    names.'
    ]
    row='1'
    column='486'
    ]
    ]

Run it!

Simple steps to get this template running.

See below.

Running On Premises

In this section we help you run this template on your computer.

Where to Download Anypoint Studio and the Mule Runtime

If you are new to Mule, download Anypoint Studio and the Mule runtime.

Note: Anypoint Studio requires JDK 8.

Importing a Template into Studio

In Studio, click the Exchange X icon in the upper left of the taskbar, log in with your Anypoint Platform credentials, search for the template, and click Open.

Running on Studio

After you import your template into Anypoint Studio, follow these steps to run it:

- Locate the properties file mule.dev.properties, in src/main/resources.

- Complete all the properties required as per the examples in the "Properties to Configure" section.

- Right click the template project folder.

- Hover your mouse over Run as.

- Click Mule Application (configure).

- Inside the dialog, select Environment and set the variable mule.env to the value dev.

- Click Run.

Because this template writes to a database, the run also involves setting up a database driver. The full sequence, including those steps, is:

- Locate the properties file mule.dev.properties, in src/main/resources.

- Fill out all the properties required as per the examples in the "Properties to Configure" section.

- Add a dependency for your database driver to the pom.xml file, or add the external JAR file to the build path and rebuild the project.

- Configure GenericDatabaseConnector in the Global Elements section of the configuration flow to use your database-specific driver. The classpath to the driver needs to be supplied here.

- By default, this template relies on an existing table named Account in the database of your choice and runs its SQL statements against that table. Feel free to customize the prepared statements to use a different database table or different columns.

- Once that is done, right click your template project folder.

- Hover your mouse over "Run as".

- Click "Mule Application".

Running on Mule Standalone

Update the properties in one of the property files, for example in mule.prod.properties, and run your app with a corresponding environment variable. In this example, use mule.env=prod.

Running on CloudHub

When creating your application in CloudHub, go to Runtime Manager > Manage Application > Properties to set the environment variables listed in "Properties to Configure" as well as the mule.env value.

Alternatively, while creating your application on CloudHub (or later, as a next step), go to Deployment > Advanced to set all the environment variables detailed in "Properties to Configure", as well as mule.env.

Follow the other steps defined here. Once your app is set up and started, there is nothing else to do: every time an Account is created or modified, it is automatically synchronized to the supplied database table.

Once your app is set up, started, and switched to outbound messaging processing, you need to define Salesforce outbound messaging and a simple workflow rule. This article will show you how to accomplish this.

The most important setting is the Endpoint URL, which needs to point to your application running on CloudHub, e.g. http://yourapp.cloudhub.io:80. Additionally, add just a few fields to Fields to Send to keep things simple to begin with.

Once this is done, every time you change an Account in the source Salesforce org, that Account is sent as a SOAP message to the HTTP endpoint of the application running in CloudHub.
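
To give a feel for what arrives at the HTTP endpoint, the following Python sketch extracts Account fields from a SOAP outbound message. It is an illustration only, not the template's implementation. The sample payload is abbreviated and hypothetical, its namespaces and values are assumptions, and the parser matches elements by local name so that it does not depend on them.

```python
import xml.etree.ElementTree as ET
from typing import Dict, List, Optional

# Abbreviated, hypothetical payload; a real outbound message carries more elements.
SAMPLE_MESSAGE = """
<soapenv:Envelope xmlns:soapenv="http://schemas.xmlsoap.org/soap/envelope/">
  <soapenv:Body>
    <notifications xmlns="http://soap.sforce.com/2005/09/outbound">
      <Notification>
        <Id>hypothetical-notification-id</Id>
        <sObject xmlns:sf="urn:sobject.enterprise.soap.sforce.com">
          <sf:Id>hypothetical-account-id</sf:Id>
          <sf:Name>Example Account</sf:Name>
        </sObject>
      </Notification>
    </notifications>
  </soapenv:Body>
</soapenv:Envelope>
""".strip()


def local_name(tag: str) -> str:
    """Strip the '{namespace}' prefix that ElementTree puts on tag names."""
    return tag.rsplit("}", 1)[-1]


def extract_accounts(soap_xml: str) -> List[Dict[str, Optional[str]]]:
    """Return one dict of field name to value per sObject in the message."""
    root = ET.fromstring(soap_xml)
    accounts = []
    for element in root.iter():
        if local_name(element.tag) == "sObject":
            accounts.append({local_name(c.tag): c.text for c in element})
    return accounts


accounts = extract_accounts(SAMPLE_MESSAGE)
```

Only the fields selected under Fields to Send appear inside each sObject, which is one reason to start with a small set.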

Deploying a Template in CloudHub

In Studio, right click your project name in Package Explorer and select Anypoint Platform > Deploy on CloudHub.

Properties to Configure

To use this template, configure properties (such as credentials and configurations) in the properties file or in CloudHub from Runtime Manager > Manage Application > Properties. The sections that follow list example values.

Application Configuration

- http.port: 9090

- page.size: 200

- scheduler.frequency: 10000

- scheduler.startDelay: 100

- watermark.default.expression: YESTERDAY

- trigger.policy: push|poll

Database Connector Configuration

- db.host: localhost

- db.port: 3306

- db.user: user-name1

- db.password: user-password1

- db.databasename: dbname1

To connect to a different database, provide the JAR for its driver library and change the value of the corresponding field in the connector.

Salesforce Connector Configuration

- sfdc.a.username: joan.baez@orgb

- sfdc.a.password: JoanBaez456

- sfdc.a.securityToken: ces56arl7apQs56XTddf34X

API Calls

Salesforce imposes limits on the number of API calls that can be made, so estimating that number may be an important factor to consider. The number of API calls made by the Account Broadcast template can be calculated using the formula:

1 + X + X / 200

X is the number of Accounts to be synchronized on each run.

X is divided by 200 (rounded up to a whole number of calls) because, by default, Accounts are gathered in groups of 200 for each Upsert API call in the commit step. Also consider that these calls are executed again on every polling cycle.

For instance, if 10 records are fetched from the origin instance, then 12 API calls are made (1 + 10 + 1).
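
The formula can be checked with a short Python sketch. The function names are hypothetical, and rounding X / 200 up to a whole call is an interpretation based on the worked example above (1 + 10 + 1). The second function assumes the scheduler fires at a fixed frequency given in milliseconds, as the scheduler.frequency example value suggests.

```python
import math


def estimate_api_calls(accounts: int, page_size: int = 200) -> int:
    """Estimate API calls for one run: 1 + X + ceil(X / page_size)."""
    if accounts < 0:
        raise ValueError("accounts must not be negative")
    return 1 + accounts + math.ceil(accounts / page_size)


def runs_per_day(frequency_ms: int) -> int:
    """Number of polling cycles in 24 hours at a fixed frequency."""
    return (24 * 60 * 60 * 1000) // frequency_ms


calls_for_ten = estimate_api_calls(10)        # 1 + 10 + 1
calls_for_400 = estimate_api_calls(400)       # 1 + 400 + 2
idle_calls_per_day = runs_per_day(10000) * estimate_api_calls(0)
```

Even a cycle that finds no changed Accounts makes at least one call under this formula, so a short polling interval adds up over a day.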

Customize It!

This brief guide provides a high level understanding of how this template is built and how you can change it according to your needs. As Mule applications are based on XML files, this page describes the XML files used with this template. More files are available such as test classes and Mule application files, but to keep it simple, we focus on these XML files:

- config.xml

- businessLogic.xml

- endpoints.xml

- errorHandling.xml

config.xml

This file provides the configuration for connectors and configuration properties. Only change this file to make core changes to the connector processing logic. Otherwise, all parameters that can be modified should instead be in a properties file, which is the recommended place to make changes.

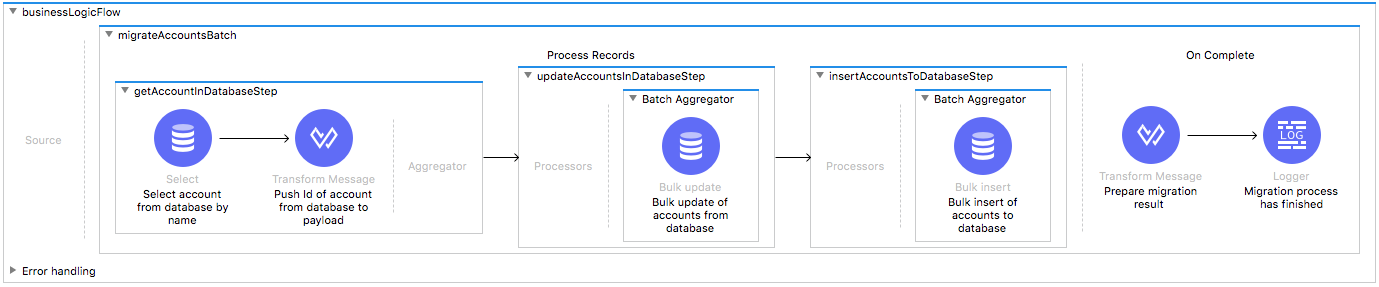

businessLogic.xml

The functional aspect of the template is implemented in this XML file, directed by a batch job that is responsible for creations and updates. The message processors make up four high-level actions that fully implement the logic of this template:

- During the Input stage, the template queries Salesforce for all the existing Accounts that match the filtering criteria.

- During the Process stage, each Salesforce Account is checked by name against the database to see whether a matching record already exists.

- The choice routing element decides whether to perform an update operation on the selected database columns or an insert operation.

- Finally, during the On Complete stage, the template logs output statistics to the console.
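
The match-then-route logic in the steps above can be sketched in Python using an in-memory SQLite database. This is an illustration of the decision only, not the template's Mule flow. The table layout and column names are hypothetical and are not taken from account.sql.

```python
import sqlite3
from typing import Dict


def upsert_account(conn: sqlite3.Connection, account: Dict[str, str]) -> str:
    """Look the account up by name, then update or insert accordingly."""
    row = conn.execute(
        "SELECT id FROM account WHERE name = ?", (account["Name"],)
    ).fetchone()
    if row is None:
        # No match in the database: take the insert route.
        conn.execute(
            "INSERT INTO account (name, phone) VALUES (?, ?)",
            (account["Name"], account["Phone"]),
        )
        return "insert"
    # A match exists: update the selected columns only.
    conn.execute(
        "UPDATE account SET phone = ? WHERE id = ?", (account["Phone"], row[0])
    )
    return "update"


conn = sqlite3.connect(":memory:")
# Hypothetical schema for the sketch.
conn.execute("CREATE TABLE account (id INTEGER PRIMARY KEY, name TEXT, phone TEXT)")

incoming = [
    {"Name": "Acme", "Phone": "111"},
    {"Name": "Globex", "Phone": "222"},
    {"Name": "Acme", "Phone": "333"},  # same name again, so this is an update
]

# Collect simple statistics, similar in spirit to the On Complete log output.
stats = {"insert": 0, "update": 0}
for account in incoming:
    stats[upsert_account(conn, account)] += 1
```

Matching by name means two distinct Salesforce Accounts with the same name map to one database row, which is worth keeping in mind when adapting the criteria.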

endpoints.xml

This file consists of three flows.

The first is the push flow. It contains an HTTP endpoint that listens for Salesforce's outbound messages. Each message is processed, and the batch job is then executed to create or update the corresponding Accounts.

The second is the scheduler flow. It contains the Scheduler endpoint that periodically triggers the sfdcQuery flow and then executes the batch job.

The third is the sfdcQuery flow. It contains the watermarking logic, which queries Salesforce for Accounts that were created or updated since the last poll and that meet the criteria defined in the query. The last invocation timestamp is stored using the Object Store component and is updated after each Salesforce query.
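
The watermarking idea can be sketched in a few lines of Python. This is a simplified illustration, not the template's flow: the class and function names are hypothetical, an in-memory object stands in for the persistent store, and a list filter stands in for the Salesforce query.

```python
from datetime import datetime, timezone
from typing import Dict, List


class WatermarkStore:
    """In-memory stand-in for a persistent key-value store."""

    def __init__(self, default: datetime) -> None:
        self._value = default

    def get(self) -> datetime:
        return self._value

    def set(self, value: datetime) -> None:
        self._value = value


def poll_once(store: WatermarkStore, records: List[Dict], now: datetime) -> List[Dict]:
    """Return records modified since the last poll, then advance the watermark."""
    last_run = store.get()
    changed = [r for r in records if r["LastModifiedDate"] > last_run]
    store.set(now)  # store the invocation timestamp after the query
    return changed


def utc(year: int, month: int, day: int) -> datetime:
    return datetime(year, month, day, tzinfo=timezone.utc)


store = WatermarkStore(default=utc(2024, 1, 1))
records = [
    {"Name": "Acme", "LastModifiedDate": utc(2024, 1, 2)},
    {"Name": "Globex", "LastModifiedDate": utc(2023, 12, 31)},
]
first_poll = poll_once(store, records, now=utc(2024, 1, 3))

records.append({"Name": "Initech", "LastModifiedDate": utc(2024, 1, 4)})
second_poll = poll_once(store, records, now=utc(2024, 1, 5))
```

In this sketch the first poll returns only Acme and the second only Initech, because each poll compares against the timestamp saved by the previous one.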

The trigger.policy property defines which endpoint the template receives data from. When the push policy is selected, the HTTP inbound connector is used for Salesforce's outbound messaging and the polling mechanism is ignored. The property accepts only two values, push or poll; any other value results in the template ignoring all messages.
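
The effect of trigger.policy can be summarized as a small decision function. The Python sketch below is hypothetical and only mirrors the behavior described above. The source labels "http" and "scheduler" are invented for the illustration, and the assumption that the poll policy ignores HTTP messages is inferred by symmetry with the push case.

```python
def accepts(policy: str, source: str) -> bool:
    """Decide whether a message from the given source should be processed.

    source is "http" for a Salesforce outbound message and "scheduler"
    for a polling cycle (labels invented for this sketch).
    """
    if policy == "push":
        return source == "http"
    if policy == "poll":
        return source == "scheduler"
    # Any other value: every message is ignored.
    return False


decisions = {
    (policy, source): accepts(policy, source)
    for policy in ("push", "poll", "both")
    for source in ("http", "scheduler")
}
```

A misspelled policy value therefore fails silently, so it is worth checking this property first when no Accounts are being synchronized.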

errorHandling.xml

This file handles how your integration reacts depending on the different exceptions. This file provides error handling that is referenced by the main flow in the business logic.